How do we monitor and optimize our website presence and increase our search click-through rates? One approach is to adapt your content to include the terminology that people actually use in their “long-tail” searches — the longer, smaller volume searches. This article explores a technique to discover these ‘common’ phrases that people are already using to find your content, so you can look for opportunities for improvement.

The following analysis is performed in Microsoft Excel, using the Analytics Edge Add-in with the Google Search connector. Download the workbook: GoogleSearch-SearchEngineRankings-1-0.xlsx

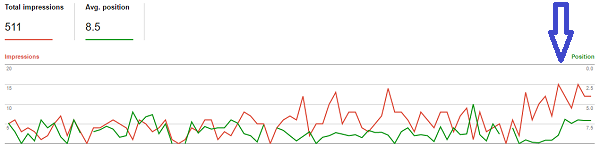

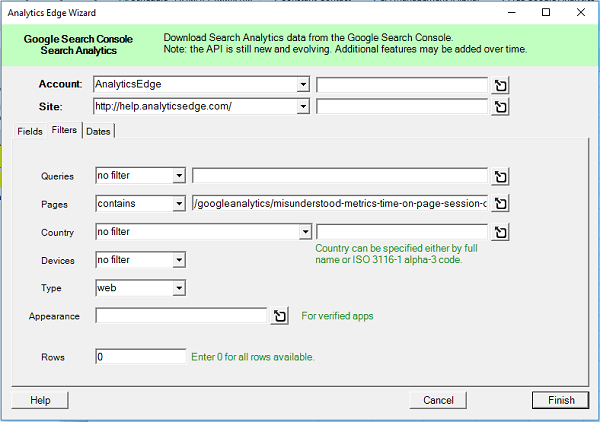

Although you could be looking at your entire website for opportunities, in this example I am going to look at improving the search performance of a specific article: Misunderstood Metrics: Time on Page/Sessions Duration. I start by downloading the search queries used to find that web page using the Google Search connector, and save it to a worksheet. The download pulls the Fields ‘query’, and uses a Filter for the page I want. [Note: if you are trying the companion workbook, you will have to configure the query before you can begin]

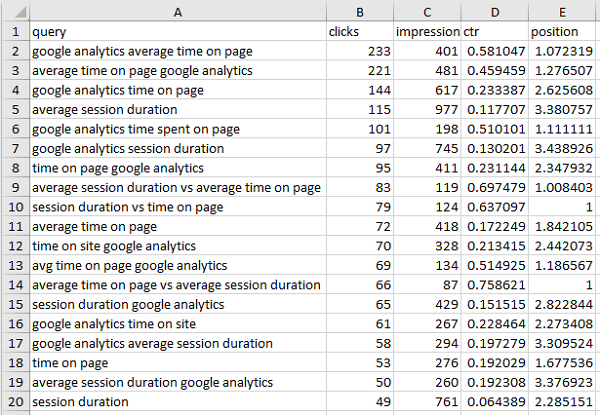

This results in a list of over 1100 unique search queries where the page appeared in the search results, many of which are using the some of the same words in the same order. I want to look at combinations of 2, 3, 4, 5 and 6 words to see if there are combinations that:

- are relevant to the article content

- show significant search volume

- do not perform as well as as other terms [opportunity!]

Analysis Approach

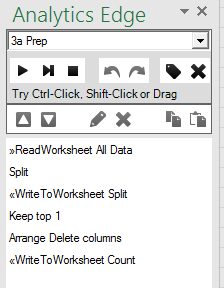

The process to do this is not really complicated, but there are a lot of steps involved, which makes it ideal for automation with Analytics Edge. We need all of the 2-word phrases in each query, plus all of the 3-word phrases, plus all of the 4-word phrases, and so on.

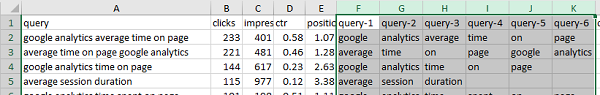

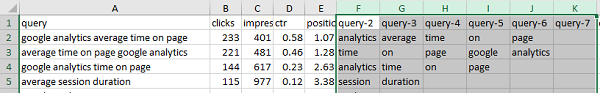

To get all the 2-word phrases, we would take the 1st and 2nd word, then the 2nd and 3rd, the 3rd and 4th, and so on. This can be accomplished by first splitting the queries into individual words, and then processing that list using a repeating macro. The Split function makes the first step easy, creating columns for each word in the queries, and it is used in a preparation macro before the main repeating macro.

Repeating Macro

In each pass through the repeating macro, we first Filter any rows where there are no words in either of the first 2 columns, then Join the two columns (with a space) to a new ‘phrase” column and use Append to Worksheet to build up a list of ‘2’-word phrases.

To process the next 2 columns, we can simply delete the first ‘word’ column (“query-1” in this case) and repeat the same macro again. Do it again for the next pair or words, and the next and the next. The results of each pass are appended to the ‘2’ worksheet, which builds up a complete list of all the 2-word phrases in the original queries.

Do It All Again

To get 3-word phrases, we simply redo the previous steps, but instead of grabbing just 2 words in a phrase, we get 3 words. Then the 4-word phrases. Then the 5, and the 6. The process is made easier if you copy the macro steps we did for the 2-word analysis and paste them into new macros for the 3-word analysis, and then edit the few steps that need changing. A few minutes later, the whole process is automated and can be run with a click of the Refresh All button.

Repeat…Forever?

That was a simple description of the process, but something has to tell the repeat macro how many times to run through the loop. The number of columns to be processed in the Split worksheet depends on how many words are found in the longest query, and we don’t know what that number is before we start.

That was a simple description of the process, but something has to tell the repeat macro how many times to run through the loop. The number of columns to be processed in the Split worksheet depends on how many words are found in the longest query, and we don’t know what that number is before we start.

To deal with that, I simply grab the first row of the results, delete the leading (fixed) columns, and write it to a temporary worksheet (called Count), transposed so the column names are listed vertically in a single column. The more words there are to be processed, the more rows there will be in the Count worksheet.

Those steps are added to the preparation macros, and the repeat macros use as the Count worksheet a counter for the number of loops to run.

Summarizing the Results

Having created worksheets with the 2, 3, 4, 5 and 6-word phrases, we could stop here and leave it to you to look for opportunities, but that is a lot of data to sift through, and that’s not much better than the original list of queries. I wanted a summary that helped me to see more about the phrases that have promise, and the queries behind them.

What is a ‘promising’ phrase? What would an ‘opportunity’ look like? You can come up with your own definition, but I wanted to see a few more metrics around the phrases, like how many unique queries included the phrase, and what was the total number of clicks and impressions:

- total clicks, for all queries that included the phrase.

- total impressions, for all queries that included the phrase

- count of unique queries that included the phrase

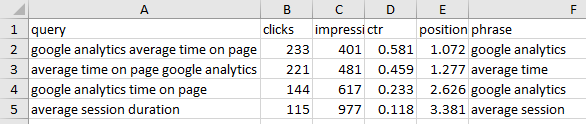

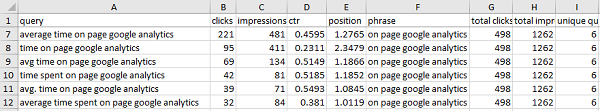

This lets me see phrases that appear in several queries, each with a small number of clicks but a larger aggregate total. For example, “on page google analytics” can be attributed to almost 500 clicks, but only 221 came from the most common query (“average time on page google analytics”).

These metrics can be obtained using the Lookup function, grabbing the Sum of the clicks and impressions for each phrase, and the Count is obtained in a similar way.

Removing Redundancy

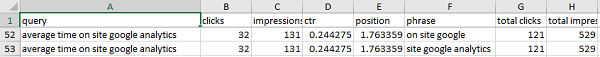

One thing became obvious from that analysis is that several different short phrases can appear in the same longer queries, yet not appear in any other query. This usually means that a longer phrase will have all the same metrics. For example, “on site google analytics” has 121 total clicks and 529 total impressions, identical to “on site google” and “site google analytics”.

Since longer is better, I used the Duplicates function to remove the redundancy of several shorter phrases appearing where a longer phrase with the same query and metrics is available.

Impressions per Query

The total clicks and total impressions only tell part of the story, though, so it is helpful to include a couple of summary metrics: I find the average and maximum number of impressions per unique query string are useful. This helps me to differentiate between a lot of queries with a lot of impressions each, versus a lot of queries of which one had a way more impressions that the rest.

- the average impressions / query for the phrase

- the maximum impressions / query for the phrase

Summary Findings

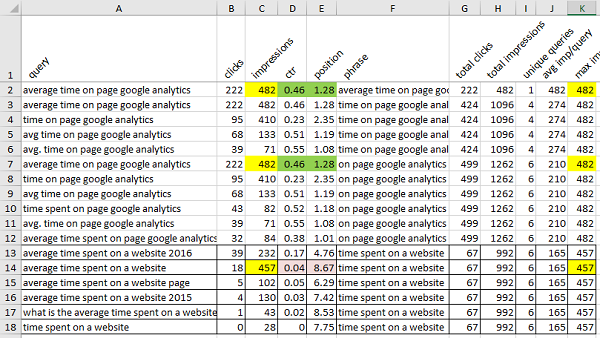

After adding the extra metrics, removing redundancy, and a quick sort (by max impressions/query), I can scan down the list looking for content optimization opportunities. In the first couple of highlighted examples below, the query with the maximum impressions/query already has a pretty good click-through-rate and position.

In the lower set, it shows there is an opportunity: the phrase “time spent on a website” is not ranking as well for the more common query “average time spent on a website“. The article also gets more clicks from a variant of the query, indicating an opportunity to ‘tune’ the article.

Reviewing the content showed it was a simple matter to work that specific phrase into the opening paragraph, changing “seeing how long people stayed on your site” into “seeing the time spent on your website”. By making little tweaks to your content like this over time, you will transform your content from a collection of your words, into a collections of words that people are searching for.

Results

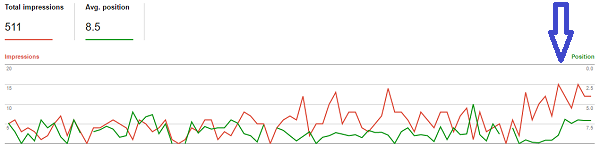

If the search algorithms smile upon us, your organic traffic goes up. In my case, there was a noticeable effect in both search impression volume as well as average position. Repeating the process on a weekly basis will uncover more long-tail phrases that can further ‘tune’ the article to answer more specific user questions, and breathe new life into an old article.