if you manage a large number of Search Console accounts and sites, this workbook will help you cope 2 big hurdles: the 2000-URL per day limit, and aligning which account to use for each URL.

if you manage a large number of Search Console accounts and sites, this workbook will help you cope 2 big hurdles: the 2000-URL per day limit, and aligning which account to use for each URL.

Inspired by an article by Glenn Gabe and a question by Christoph Cemper, this solution shows how easy it can be to build timesaving solutions with Analytics Edge macros…in minutes.

Getting a List of Accounts and Properties

To start, we need a list of accounts and the sites they have access to.

The Google Search connector has a Site Admin wizard that can give us a Site List for all the accounts you have added, but this list can contain domains as well as the properties themselves (referred to as siteUrl’s in the API). Having access to a domain does not necessarily mean you can access all the properties, so we Filter the list to only include ‘http’ siteUrl values.

As recommended by Glenn, you can create additional properties (siteUrls’s) for subdomains or subdirectories to work around the 2000-URL-per-day limit of the API. We want to process URLs in the most-specific level first, so we sort the list by the text-length of the siteUrl values, with the longest first.

You can also have access to a single property from multiple accounts, so we use the Duplicates function to keep the first occurrence of any siteUrl value.

Finally, we write the results to a ‘sites’ worksheet to use for processing.

Since this is a process we would typically do only once, or when new accounts or properties are added, we name the macro so it starts with an underscore (‘_get accounts/sites’). That tells the add-in to NOT run this particular macro when the Refresh All button is clicked. You need to select it in the Task Pane and click the Run/Play button in the Task Pane.

Getting Your Daily Fill of URL Inspection Data

Somehow you have collected a list of URLs you want to process. In my case, I used the connector’s Search Analytics query to get the URLs for the domains I manage and append them all the the ‘urls’ worksheet. You may have other techniques or there may be specific URLs you want to check or monitor.

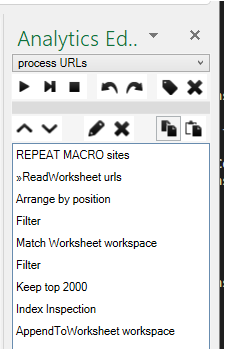

The ‘process URLs’ macro cycles through the list of accounts/properties, gets the related URLs from our worksheet, and gets results for the top 2000 that have not been previously processed. That may sound like a tell order, but when you break it down, it really is just a few simple steps.

Starting with a Repeat Macro function, we use the ‘site’s worksheet as the list to process, and the rest of the macro will repeat for each account/siteUrl entry.

Next, we grab the contents of our ‘urls’ worksheet and Filter for the URL’s that start with the siteUrl being processed. To prevent processing the same URL’s over again, we add a Match function to remove any that already exist in the ‘workspace’ worksheet (where we will save all the results).

At this point, there may actually be nothing to process, so we add a Filter function and check the option to ‘Stop macro if no data‘. When running, the Repeat Macro will simply abandon that loop and move on to the next account/property entry.

Now we have a list of URLs to process, but we need to limit it to 2000 so we don’t hit our daily limit — a simple Top/Bottom function added to keep the top 2000.

Finally we have everything we need to make the URL Inspection query. The Account and siteUrl values are saved by the Repeat Macro (as [Account] and [siteUrl]), and the list of URL’s are in the current table in memory (available as [url] which is the name of the column).

When the query finishes, the last step is to Append to Worksheet and add the new results to the end of our ‘workspace’ worksheet.

Run Daily

On a daily basis, add any new accounts or URLs to your lists, and click the Refresh All button on the Analytics Edge ribbon (or schedule it to run overnight). Any URLs not yet processed will be done – up to your 2000-per-day-per-property limit.

At any time, you can delete the contents of the ‘workspace’ worksheet and start over.

Download the workbook here:

https://help.analyticsedge.com/report/google-search-bulk-url-inspection-queries/